8. The Illusion of Artificial Intelligence

Why Machines Don’t Replace Humans, They Replace Institutions

I. The Persistent Confusion

Artificial Intelligence is discussed as if it were a mind.

This is the original mistake.

The language gives it away.

We ask whether machines understand.

Whether they are creative.

Whether they have agency.

These questions feel natural.

They are also irrelevant.

They import biological categories into a domain where they no longer apply.

A mind is a living system.

It is coupled to a body.

It learns through risk, fatigue, error, and consequence.

None of this is present in a machine.

When these categories are applied anyway, debate becomes theatrical.

Philosophical arguments pile up.

Demonstrations are mistaken for evidence.

Fluency is mistaken for depth.

Meanwhile, the real shift passes unnoticed.

The transformation underway is not psychological.

It is structural.

What is changing is not how machines think.

It is where thinking is being relocated.

Institutions have always thought without minds.

They decide.

They persist.

They act across time.

That capacity is now being mechanized.

Not loudly.

Not dramatically.

Already underway.

II. Intelligence With Bodies, Intelligence Without Them

Not all intelligence is biological.

Biological intelligence is inseparable from a body.

Perception feeds action.

Action carries risk.

Error has cost.

Learning is metabolic.

It consumes energy.

It leaves traces in tissue.

It cannot be paused or reset without consequence.

This coupling matters.

It sets limits.

It grounds meaning in survival.

Institutional intelligence operates differently.

It does not perceive.

It does not act directly.

It does not bear risk.

It persists.

Rules continue after their authors die.

Procedures function after their designers leave.

Decisions propagate long after the original context dissolves.

No understanding is required at each step.

Only compliance.

This is not a deficiency.

It is the point.

Institutional intelligence exists to stabilize meaning once comprehension stops scaling.

It replaces insight with procedure.

Judgment with form.

Responsibility with role.

A common confusion appears here.

Because institutions are staffed by people, their intelligence is assumed to be an extension of human cognition.

It is not.

The people rotate.

The structure remains.

What persists is not belief or intention.

It is constraint.

This intelligence does not think.

It holds.

And what is now being mechanized is not the intelligence of organisms, but the intelligence of structures that survive them.

III. Why Brain Emulation Is a Dead End

The dominant ambition is imitation.

Neurons are mapped.

Learning curves are plotted.

Emergence is invoked.

The premise is familiar.

If the brain can be approximated closely enough, intelligence will appear.

This framing assumes that intelligence is a pattern that can be detached from the conditions that produce it.

That assumption fails.

Biological intelligence is not only computational.

It is embodied.

A body imposes friction.

Delay.

Irreversibility.

Perception is shaped by movement.

Learning is shaped by injury and fatigue.

Error is shaped by consequence.

Remove these constraints and the system does not generalize.

It drifts.

Embodiment cannot be simulated without cost.

Once cost is removed, behavior loses calibration.

Scaling approximation does not resolve this.

It intensifies it.

Larger models replay patterns more fluently.

They do not acquire stakes.

What looks like thinking is pattern replay under compression.

What looks like reasoning is statistical continuity across contexts.

The outputs cohere because the distributions cohere.

Not because anything is being held accountable.

This is why brain emulation keeps missing its target.

It aims at the surface form of intelligence while removing the conditions that give that form weight.

The result is not a mind without a body.

It is a generator without consequence.

And this clarifies the next distinction that matters.

IV. What These Systems Actually Do

What current systems actually do is simpler.

They generate plausible continuations.

Given a context, they extend it.

Given a pattern, they reproduce its shape.

This behavior is often described as reasoning.

It is not.

It is intuition emulation.

Human intuition operates as fast pattern recognition under pressure.

It proposes.

It does not justify.

Current systems mirror this surface function.

They suggest without committing.

They respond without owning the response.

No memory binds an output to a future state.

No decision fixes an interpretation.

No consequence returns to recalibrate behavior.

Each interaction is fresh.

Each output dissolves once delivered.

This absence is often framed as a defect.

It is not.

It is the condition that makes the system useful.

Without commitment, outputs remain flexible.

Without persistence, errors are cheap.

Without consequence, exploration is unconstrained.

These systems are powerful precisely because they are not cognitive.

They do not carry responsibility forward.

They do not maintain identity across time.

They behave like intuition unburdened by judgment.

Once this is seen, a different question becomes unavoidable.

V. When Structure Replaced Understanding

Institutions solved a different problem.

Not how to think better.

How to continue functioning once thinking no longer scaled.

As systems grew, individual understanding became insufficient.

Too many rules.

Too many dependencies.

Too much temporal reach.

At that point, comprehension ceased to be the stabilizing factor.

Structure took over.

Forms replaced judgment.

Procedures replaced insight.

Roles replaced persons.

Meaning was no longer carried by understanding.

It was carried by compliance.

This substitution was not ideological.

It was operational.

A legal system does not require every participant to grasp its logic.

A financial system does not require belief in its rationale.

A medical system does not require shared intuition across practitioners.

They require adherence.

This is the intelligence that governs modern coordination.

Durable.

Impersonal.

Indifferent to understanding.

It persists because it is symbolic.

It survives because it is procedural.

Artificial systems are entering at this level.

Not as minds.

Not as agents.

As mechanisms that extend symbolic substitution beyond human execution.

Once intelligence is defined this way, the locus of change shifts again.

VI. What Machines Actually Replace

Machines do not replace workers.

They replace offices.

The unit being displaced is not the person.

It is the function.

An office exists to execute procedure.

To route decisions.

To apply rules consistently.

Judgment appears only at the edges.

Most of the work is substitution.

Machines enter cleanly here.

They do not replace judgment.

They replace procedure.

They do not argue.

They apply.

They do not weigh meaning.

They enforce form.

This is often misread as automation of labor.

It is not.

It is automation of coordination.

Intelligence as lived experience remains untouched.

Conversation.

Trust.

Care.

What is displaced is intelligence as structure.

As role.

As repeatable function across time.

This is why disruption concentrates in institutions, not relationships.

And it explains why the next problem is not capability, but trust.

VII. Trust Is a Structural Property

Trust is not confidence.

It is not accuracy.

It is not fluency.

Confidence can be misplaced.

Accuracy can be accidental.

Fluency can deceive.

Trust operates on a different axis.

Trust requires persistence.

What was said must remain what was said.

Decisions must not dissolve after delivery.

Trust requires traceability.

An output must have a lineage.

A reasoned path that can be inspected after the fact.

Trust requires contestability.

Errors must be challengeable.

Corrections must not erase history.

Institutions earn trust this way.

Not by being right in every case.

By being auditable in every case.

A court can be wrong.

A regulator can fail.

A medical protocol can be revised.

What matters is that the decision persists, the rationale can be examined, and responsibility can be located.

Without structure, output is disposable.

It can be ignored, denied, or regenerated.

Without trace, responsibility evaporates.

There is no author, no owner, no point of appeal.

This is the boundary current systems cannot cross on their own.
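
The three requirements named above (persistence, traceability, contestability) can be made concrete with a small sketch. What follows is an illustration only, not a description of any existing system: the `DecisionLedger` class, its method names, and the claims-office example are all hypothetical. The idea is an append-only record in which a decision stays what it was, carries its rationale and owner, and can be challenged or revised only by adding entries, never by overwriting them.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Entry:
    """One immutable record (hypothetical structure, for illustration)."""
    index: int
    kind: str        # "decision", "challenge", or "revision"
    content: str     # what was decided, objected to, or corrected
    rationale: str   # the path behind the entry, kept for later inspection
    owner: str       # who or what answers for this entry
    refers_to: Optional[int] = None  # the earlier entry this one responds to


class DecisionLedger:
    """Append-only log: entries are added, never edited or deleted."""

    def __init__(self) -> None:
        self._entries: list[Entry] = []

    def _append(self, kind: str, content: str, rationale: str,
                owner: str, refers_to: Optional[int] = None) -> Entry:
        # A response may only point at an entry that already exists.
        if refers_to is not None and not 0 <= refers_to < len(self._entries):
            raise ValueError("refers_to must point at an existing entry")
        entry = Entry(len(self._entries), kind, content, rationale, owner, refers_to)
        self._entries.append(entry)
        return entry

    def commit(self, content: str, rationale: str, owner: str) -> Entry:
        """Persistence: fix a decision so it remains what was said."""
        return self._append("decision", content, rationale, owner)

    def challenge(self, target: int, objection: str, owner: str) -> Entry:
        """Contestability: record an objection against an earlier entry."""
        return self._append("challenge", objection,
                            f"challenge to entry {target}", owner, target)

    def revise(self, target: int, content: str, rationale: str, owner: str) -> Entry:
        """Correction that adds to history instead of erasing it."""
        return self._append("revision", content, rationale, owner, target)

    def lineage(self, index: int) -> list[Entry]:
        """Traceability: walk back from an entry to the original decision."""
        chain: list[Entry] = []
        current: Optional[int] = index
        while current is not None:
            entry = self._entries[current]
            chain.append(entry)
            current = entry.refers_to
        return list(reversed(chain))  # oldest first


# Usage: a decision, a challenge to it, and a revision answering the challenge.
ledger = DecisionLedger()
denied = ledger.commit("Claim 17 denied", "Policy section 4 excludes it", "claims-office")
objection = ledger.challenge(denied.index, "Section 4 was misapplied", "claimant")
revised = ledger.revise(objection.index, "Claim 17 approved",
                        "Section 4 does not apply to this case", "claims-office")

for entry in ledger.lineage(revised.index):
    print(entry.index, entry.kind, entry.owner, entry.content)
```

Nothing in the sketch depends on how the decision was generated. The properties belong to the record around the output, which is the sense in which trust is structural.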

VIII. What Scale Will Never Fix

The future of AI is not smarter generators.

It is accountable systems.

Generation scales easily.

Fluency improves with data and compute.

None of this resolves responsibility.

The missing capability is not intelligence in the cognitive sense.

It is commitment.

A system that can commit fixes an interpretation.

It accepts that a decision occurred.

It allows that decision to persist.

A system that can be questioned exposes its path.

Not as a performance of explanation.

As a reconstructable process.

A system that can fail visibly makes error legible.

Failure becomes inspectable rather than mysterious.

Correction becomes structural rather than cosmetic.

These properties do not emerge from scale.

They must be designed.

This is not a philosophical preference.

It is a structural necessity.

Once artificial systems participate in institutional roles, their outputs must be governable in institutional terms.

And this leaves one final distinction to be clarified.

IX. Clearing the Category

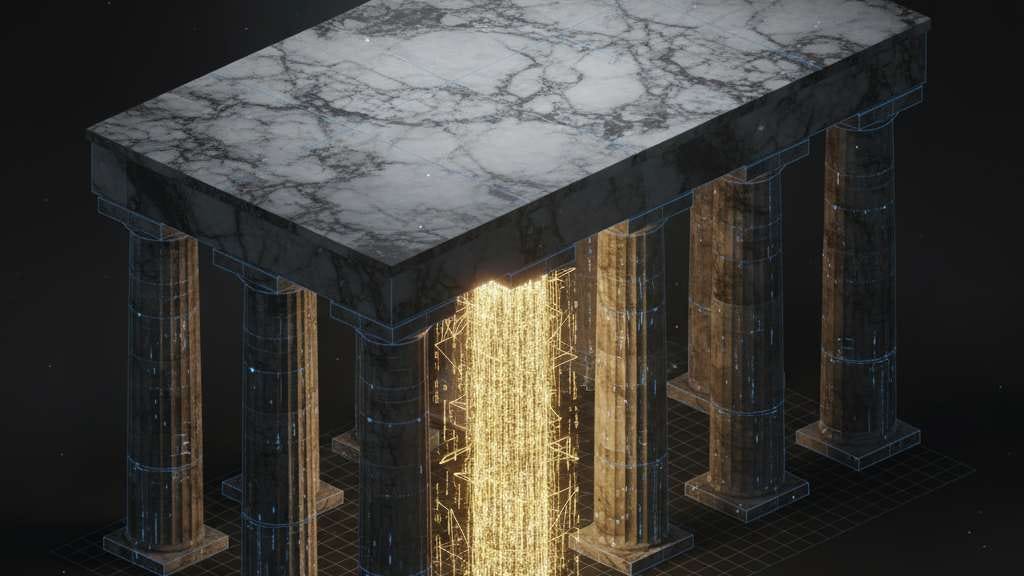

Artificial Intelligence is not artificial life.

It is artificial institution.

This single reclassification dissolves much of the surrounding confusion.

Artificial life would require embodiment.

Risk.

Irreversibility.

A stake in outcomes.

What we are building has none of these.

What we are building executes rules.

It persists decisions.

It coordinates action across time.

That is institutional behavior.

Once this is seen, the debates collapse.

There is no fear of replacement.

Nothing is competing with living intelligence.

There is no myth of emergence.

No hidden mind is waiting to appear.

There is no confusion about agency.

Responsibility does not arise from intention here.

It arises from structure.

The relevant questions stop being psychological.

They become architectural.

What commits a decision.

What records it.

What allows it to be challenged.

What governs its revision.

Only architecture remains.

If intelligence can exist without understanding, governance becomes a design problem rather than a philosophical one.

Reading Context

This article reclassifies contemporary AI as a mechanization of institutional cognition rather than an extension of human intelligence.

It does not argue for a position, forecast outcomes, or assign responsibility.

It examines the conditions under which a certain class of phenomena becomes possible once meaning is externalized, scaled, and no longer regulated by individual human cognition.

The analysis is second-order.

It addresses constraints, not preferences.

The ideas developed here are shaped by work in embodied and enactive cognition, systems theory, semiotics, engineering failure analysis, and institutional theory. These traditions are not treated as authorities, but as sources of constraints that remain valid once scale and persistence are taken seriously.

If the level at which this article operates feels unfamiliar, or if it seems to bypass debates that usually come first, the orientation article "How to Read What Follows" clarifies the ground on which the series is built.